This is the second in the #LaunchWithAI series, covering Vitra.ai. Do you want to share your story with the greater startup community? Send those our way here!

Helping quality content reach the linguistically diverse global market is a challenge that Vitra.ai decided to solve using AI. Co-founder Satvik Jagannath saw that businesses often had great content—videos, images, podcasts, and text—but what they needed was more reach, a way to break into global markets, expand their presence and increase their revenue using content they already had, instead of reinventing the wheel.

As Satvik explains, “Everyone creates world-class content today, but where everyone struggles is when it comes to the content reach. After three years of research, I concluded that language is the barrier.”

The idea was to use natural language processing (NLP) to remove those barriers with lightning-fast, context-sensitive translation. His team initially chose a technology stack based on a combination of DIY components, providing the greatest degree of granular control.

But after a flurry of initial success, Vitra.ai’s in-house AI solution started facing issues. Scaling their solution was proving harder than they had foreseen, until they discovered a new way to turn around a tough situation and reap the full benefits of AI while achieving cost savings of 22%.

Adopting AI in the cloud for translation

Vitra.ai’s successful initial model gained a very high satisfaction rate among early customers. The company’s platform provided AI-based translation 100x faster than older solutions, saving costs and improving further with training over time.

But when it came time to scale their solution to meet demand, Vitra.ai’s team found there was too much operational overhead to manage multiple custom APIs and their solution’s complex infrastructure.

Originally, Vitra.ai was running in-house custom models on a multi-cloud architecture. Satvik admits that even from the beginning, this was time-consuming and cost-intensive, taking three to six months to train the models to the point where they were able to provide high accuracy in translation.

But once the platform started to take off, they ran into trouble. As Vitra.ai gained customers and demand increased, they found it difficult to keep their system up and running and to scale it to keep pace with their customers’ needs.

In one sense, the business was thriving: the models worked, and customers were reaping the benefits. But the workload for Satvik’s teams was unmanageable, cutting into their margins.

“We couldn’t manage these models,” Satvik says. “The infrastructure complexity was just scaling up. We had to manage so many services across multiple places, causing network latency and debugging nightmares.”

Vitra.ai needed to pivot quickly before these problems impacted their customer experience.

Roadblocks with multi-cloud and DIY infrastructure

Satvik admits, looking back, that while in-house development may appeal to startups, it isn’t always the most efficient solution.

Vitra.ai’s platform was originally built on an in-house developed multi-cloud architecture, with services like GPU, CPU/compute, applied AI, text services, and more, from various cloud providers. However, using multiple providers proved cumbersome at scale, leading to bottlenecks and problems with tracing and debugging.

“The system was becoming impossible to manage,” says Satvik. “Suddenly, you had a customer who wanted to use hundreds of hours; they wanted the output over a period of three hours, and you have to figure out how to scale for that period of time.”

The platform’s complex and distributed architecture, based on GPU and CPU instances, all running at 100% utilization, made a load balancer approach ineffective. Though autoscaling helped, costs were still out of control. Vitra.ai often ended up over-provisioning instances simply to cater to spikes, further cutting into margins.
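To make the over-provisioning problem concrete, here is a rough back-of-the-envelope sketch. The function names, the `realtime_factor` parameter, and all of the numbers are illustrative assumptions, not figures from Vitra.ai. It estimates how many instances a burst job needs to finish by its deadline, and how little of that capacity an ordinary workload would use if those instances stayed provisioned.

```python
import math


def instances_for_burst(media_hours, deadline_hours, realtime_factor=1.0):
    """Instances needed to process `media_hours` of content within `deadline_hours`.

    `realtime_factor` is an assumed throughput: hours of media a single
    instance can process per wall-clock hour.
    """
    if deadline_hours <= 0 or realtime_factor <= 0:
        raise ValueError("deadline_hours and realtime_factor must be positive")
    return math.ceil(media_hours / (deadline_hours * realtime_factor))


def utilization(typical_media_hours_per_hour, instances, realtime_factor=1.0):
    """Fraction of provisioned capacity that a typical workload actually uses."""
    capacity = instances * realtime_factor
    return typical_media_hours_per_hour / capacity


# Hypothetical burst: 300 hours of media due back in 3 hours,
# with each instance assumed to process 2 hours of media per hour.
burst_instances = instances_for_burst(300, 3, realtime_factor=2.0)

# Hypothetical steady state: 10 hours of media arriving per hour.
steady_utilization = utilization(10, burst_instances, realtime_factor=2.0)

print(f"instances needed for the burst: {burst_instances}")
print(f"utilization outside the burst: {steady_utilization:.0%}")
```

Under these made-up numbers, a fleet sized for the burst sits mostly idle the rest of the time, which is the kind of margin pressure the paragraph above describes.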

They began looking around for a better solution that would let them provide enterprise-grade AI while saving costs. They discovered that applied AI services offered ready-made speech-to-text solutions powered by AI and NLP, which could be the best way forward for Vitra.ai.

The solution: Sharing the workload with Azure Cognitive Services

In refactoring their platform, Vitra.ai’s goals were to decrease latency for a better customer experience, make debugging easier, and save on cloud costs. It was essential to achieve all of that without impacting the platform’s speech-to-text accuracy rate.

Looking around for a solution, Vitra.ai ran a pilot with a range of APIs for a dozen leading speech-to-text systems, including A/B testing. This careful process gave them plenty of data to compare the various platforms’ performance.
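The article does not say which metric Vitra.ai used in its pilot. As one common way to compare speech-to-text systems, the sketch below computes word error rate (WER): the word-level edit distance between a trusted reference transcript and each system's output, divided by the reference length. The system names and transcripts are hypothetical.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance between two transcripts, divided by reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # Classic dynamic-programming edit distance, keeping one row at a time.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical pilot data: one reference transcript, two candidate systems.
reference = "welcome to the global market"
candidates = {
    "system_a": "welcome to the global market",
    "system_b": "welcome to global markets",
}

scores = {name: word_error_rate(reference, text) for name, text in candidates.items()}
best = min(scores, key=scores.get)
print(scores, "best:", best)
```

A lower WER is better. This simple version ignores punctuation handling entirely, so a pilot that also cares about punctuation placement would need a separate measure for it.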

The results, backed by data and graphs, were very exciting, showing major gains in model performance. In this initial proof of concept, Azure Cognitive Services provided an overall accuracy rate of 80%, with a 30% improvement in punctuation accuracy over Vitra.ai’s own custom models.

Satvik says going with Azure Cognitive Services was an easy decision. “It wasn’t just one factor that helped us make this decision,” he says. “Hundreds of in-house tech experts and consultants assessed all the solutions’ speed, translation accuracy, and overall cost before making the decision.”

Integrating their platform with Azure Cognitive Services involved a simple API integration, which Satvik says took barely half an hour, compared to potentially months or even years of custom in-house development time.
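For a sense of how small such an integration can be, here is a minimal sketch that sends a short WAV clip to the Azure speech-to-text REST endpoint for short audio, using only the Python standard library. This is not Vitra.ai's integration; the article does not say which API or SDK the team used. The helper names, the key and region values, and the assumption of 16 kHz PCM WAV input are all illustrative.

```python
import json
import urllib.parse
import urllib.request


def build_stt_request(audio_wav, key, region, language="en-US"):
    """Build (but do not send) a speech-to-text request for a short WAV clip."""
    query = urllib.parse.urlencode({"language": language, "format": "simple"})
    url = (
        f"https://{region}.stt.speech.microsoft.com"
        f"/speech/recognition/conversation/cognitiveservices/v1?{query}"
    )
    headers = {
        "Ocp-Apim-Subscription-Key": key,
        # Assumes the clip is 16 kHz PCM WAV; adjust to match the real audio.
        "Content-Type": "audio/wav; codecs=audio/pcm; samplerate=16000",
        "Accept": "application/json",
    }
    return urllib.request.Request(url, data=audio_wav, headers=headers, method="POST")


def transcribe(audio_wav, key, region, language="en-US"):
    """Send the clip and return the recognized text, or an empty string if none."""
    request = build_stt_request(audio_wav, key, region, language)
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    return body.get("DisplayText", "")
```

Calling `transcribe(open("clip.wav", "rb").read(), key="<your key>", region="<your region>")` would return the recognized text for a valid Speech resource. Building the request separately from sending it keeps the network call easy to stub out in tests.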

“When you start off as a startup, you want to do everything,” Satvik says. “Then you realize that offloading everything you don’t want to do at scale will actually yield results.” Results like saving costs, cutting latency, and vastly improving scalability.

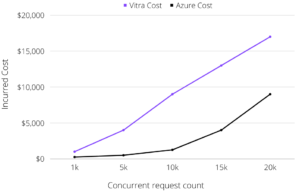

“Using Azure Cognitive Services saved us a lot,” says Satvik. “We didn’t have to provision anything; it was a pay-as-you-use model. We just paid when we used the service, and it was almost 2.5x more cost-effective than running our own infrastructure.”

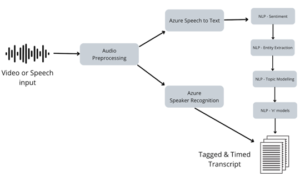

Today, Vitra.ai is using a combination of their own custom natural language processing (NLP) models alongside Azure Cognitive Speech Services for AI. “Even if you want to build something custom,” Satvik says, “you can bring your own data, and train on top of Azure’s existing models.”

Azure Cognitive Services help answer the “what” and the “who” of speech, while Vitra.ai’s custom NLP services answer the “why” and the “how,” completing the complex puzzle of speech recognition and translation.

With Vitra.ai’s new, streamlined solution in place, there were immediate benefits:

- CSAT score increase of 23%

- Better language processing, including 30% improved punctuation placement

- Immediate cost savings, cutting overall spend by 22%

Using APIs for AI has let Vitra.ai cut operational expenses along with internal R&D, allowing them to redirect the money they’re saving to platform innovation, creating and refining features to better meet customers’ needs.

Applied AI offers a wide range of benefits to businesses of all sizes, particularly startups that have a great use case for AI but are experiencing delays in specialized data engineering or growing pains as they scale their solution.

“As a startup, you have to start fast, go to market fast,” says Satvik. “Partnering with Azure Cognitive Services has let Vitra.ai continue to grow and profit in a fast-paced market, meeting the growing demand for global reach with one-click translation for the widest possible range of content.”

Deploy a translation service today. If you’re a member of Microsoft for Startups Founders Hub, you can create your Speech Service within five minutes.